SPARK Challenge 2026 — Event-Based Spacecraft Pose Estimation

Achieved 9th place globally at the AI4Space 2026 workshop (CVPR 2026) in Stream 2 by building a 6-DoF spacecraft pose estimation pipeline from event-camera data.

Project Overview

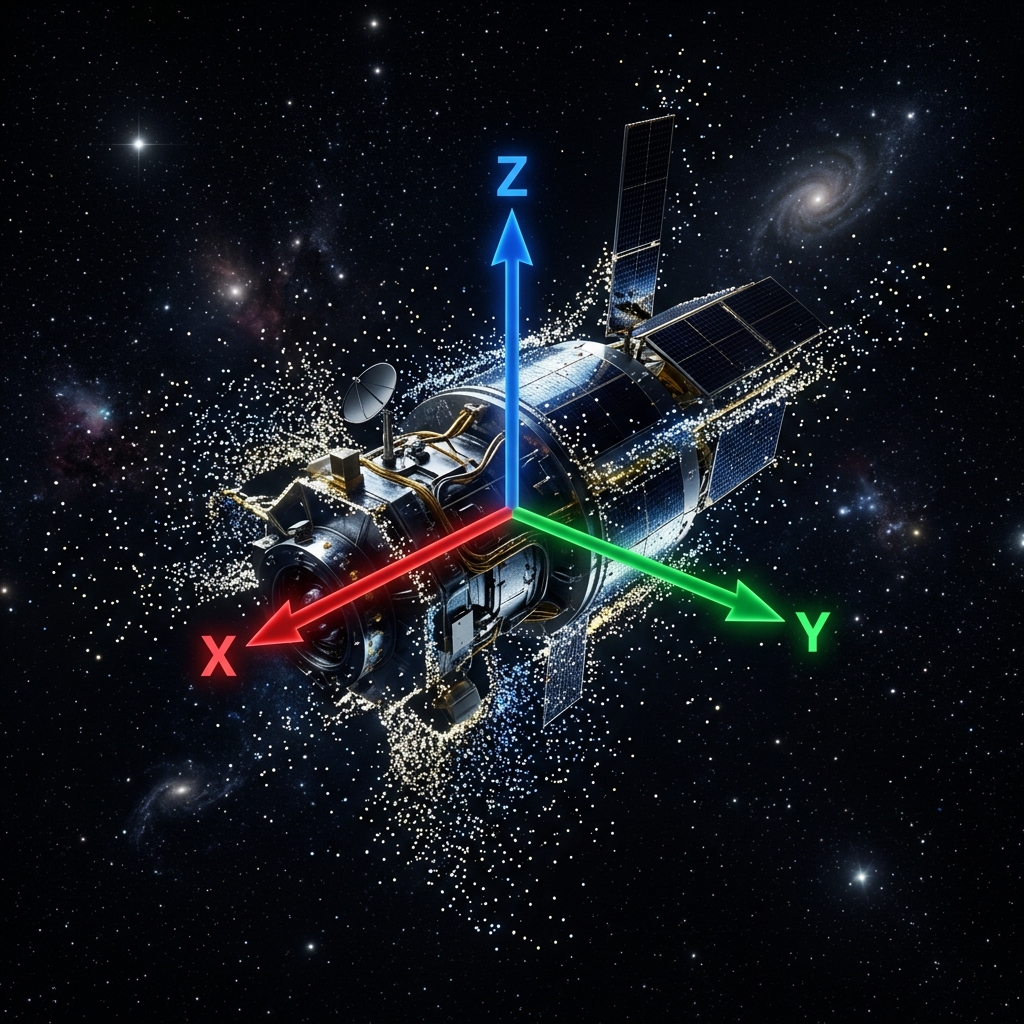

The SPARK 2026 Challenge (SPAcecraft Recognition leveraging Knowledge of Space Environment) is an international competition on spacecraft pose estimation, hosted at the AI4Space workshop at CVPR 2026. Stream 2 targets event-based vision: estimating the 6-DoF pose (position and orientation) of a spacecraft from neuromorphic event-camera data rather than from traditional frame-based cameras.

Event cameras capture per-pixel brightness changes asynchronously, producing a sparse stream of events (pixel location, timestamp, polarity) rather than full frames. Their high dynamic range and fine temporal resolution make them well suited to space applications, where lighting conditions change rapidly and motion blur is a concern.

The SPADES Dataset

The challenge uses the SPADES (SPAcecraft Pose Estimation Dataset using Event Sensing) dataset, which focuses on the Proba-2 satellite. It contains two categories of data:

- Synthetic Data: Generated using Unreal Engine (UE) and the ICNS event simulator (Blender). It features dynamic backgrounds (animated Sun and Earth) and contains 300 trajectories with 179,400 pose labels.

- Real Data: Collected at the Zero-G Laboratory (SnT, University of Luxembourg) using a scaled mockup of the Proba-2 satellite and a Prophesee Metavision EVK4-HD event vision sensor.

Technical Approach

Data Representation

The event stream was converted into 2-channel event frames (accumulated polarity surfaces), with one channel holding ON event counts and the other holding OFF event counts. Moving to this representation from more complex voxel grids significantly improved training efficiency and model convergence on high-motion trajectories.
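
As an illustration of the representation described above, here is a minimal NumPy sketch of accumulating one time window of events into a 2-channel count frame. It is not the project's actual code: the function name `events_to_frame`, the argument layout, and the polarity convention (`p > 0` means ON) are assumptions, and the events are assumed to be already sliced to a single time window.

```python
import numpy as np

def events_to_frame(x, y, p, height, width):
    """Accumulate events into a (2, H, W) count frame.

    Channel 0 counts ON events, channel 1 counts OFF events.
    Assumption: polarity p > 0 means ON; anything else means OFF.
    """
    x = np.asarray(x, dtype=np.int64)
    y = np.asarray(y, dtype=np.int64)
    on = np.asarray(p) > 0

    frame = np.zeros((2, height, width), dtype=np.float32)
    # np.add.at accumulates correctly when the same pixel fires several times
    np.add.at(frame[0], (y[on], x[on]), 1.0)
    np.add.at(frame[1], (y[~on], x[~on]), 1.0)
    return frame

# Toy window of four events on a 3x4 sensor (hypothetical values)
x = [0, 0, 2, 2]
y = [1, 1, 0, 0]
p = [1, 1, 0, 1]
frame = events_to_frame(x, y, p, height=3, width=4)
print(frame.shape)  # (2, 3, 4)
```

Compared with a voxel grid, this drops the temporal bins inside the window, so the network input stays at two channels regardless of how many events arrive.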

Model Architecture

- Backbone: EfficientNet encoders (B0, B3, and B4 variants) combined with Feature Pyramid Networks (FPN) for multi-scale feature extraction.

- Pose Head: Decoupled heads for translation and rotation, utilizing 6D rotation representations for smoother optimization.

- Loss Function: Smooth L1 loss on translation combined with a quaternion distance loss on rotation.

- Ensemble Strategy: A hybrid ensemble that takes translation predictions from the best translation experts (v7) and rotation predictions from the best rotation experts (v15).
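
The rotation and loss components listed above can be sketched in NumPy as follows. This is an illustrative sketch under stated assumptions, not the project's implementation: the 6D mapping follows the common Gram-Schmidt construction, the quaternion distance is taken to be `1 - |<q1, q2>|`, and all function names and the `rot_weight` parameter are hypothetical. The write-up does not say how the 6D output is converted before the quaternion loss is applied, so the pieces are shown separately.

```python
import numpy as np

def rotation_6d_to_matrix(d6):
    """Map a 6-vector to a 3x3 rotation matrix via Gram-Schmidt."""
    d6 = np.asarray(d6, dtype=np.float64)
    a1, a2 = d6[:3], d6[3:]
    b1 = a1 / np.linalg.norm(a1)
    a2_orth = a2 - np.dot(b1, a2) * b1      # remove the component along b1
    b2 = a2_orth / np.linalg.norm(a2_orth)
    b3 = np.cross(b1, b2)                   # completes a right-handed basis
    return np.stack([b1, b2, b3], axis=1)   # basis vectors as columns

def quaternion_distance(q1, q2):
    """1 - |<q1, q2>| on unit quaternions; q and -q give distance 0."""
    q1 = np.asarray(q1, dtype=np.float64)
    q2 = np.asarray(q2, dtype=np.float64)
    q1 = q1 / np.linalg.norm(q1)
    q2 = q2 / np.linalg.norm(q2)
    return 1.0 - abs(float(np.dot(q1, q2)))

def smooth_l1(pred, target, beta=1.0):
    """Smooth L1 (Huber-style) loss, averaged over elements."""
    diff = np.abs(np.asarray(pred, dtype=np.float64) - np.asarray(target, dtype=np.float64))
    return float(np.mean(np.where(diff < beta, 0.5 * diff**2 / beta, diff - 0.5 * beta)))

def pose_loss(t_pred, t_gt, q_pred, q_gt, rot_weight=1.0):
    """Translation term plus weighted rotation term (rot_weight is hypothetical)."""
    return smooth_l1(t_pred, t_gt) + rot_weight * quaternion_distance(q_pred, q_gt)

R = rotation_6d_to_matrix([0.3, -1.2, 0.5, 2.0, 0.1, -0.7])
print(np.allclose(R @ R.T, np.eye(3)), np.isclose(np.linalg.det(R), 1.0))
```

The appeal of the 6D form is that any pair of non-parallel 3-vectors maps to a valid rotation, so the network output needs no unit-norm constraint during optimization.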

Key Results

- 9th place globally in Stream 2 at the CVPR 2026 AI4Space workshop.

- Implemented spherical linear interpolation (SLERP) for temporal smoothing of the predicted orientations, reducing orientation jitter by over 15%.

- Achieved robust performance on both the synthetic and real event data in SPADES by applying density augmentation.
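
The SLERP-based temporal smoothing mentioned above could look roughly like the sketch below. The exact scheme used in the project (window size, weights) is not stated, so this assumes a simple recursive filter in which each smoothed orientation moves a fraction `alpha` from the previous smoothed orientation toward the new estimate. Quaternions are assumed to be in `(w, x, y, z)` order, and the names `slerp`, `smooth_orientations`, and `alpha` are hypothetical.

```python
import numpy as np

def slerp(q0, q1, t):
    """Spherical linear interpolation between two unit quaternions."""
    q0 = np.asarray(q0, dtype=np.float64)
    q1 = np.asarray(q1, dtype=np.float64)
    q0 = q0 / np.linalg.norm(q0)
    q1 = q1 / np.linalg.norm(q1)
    dot = float(np.dot(q0, q1))
    if dot < 0.0:            # q and -q are the same rotation: take the short arc
        q1, dot = -q1, -dot
    if dot > 0.9995:         # nearly identical: fall back to normalized lerp
        q = q0 + t * (q1 - q0)
        return q / np.linalg.norm(q)
    theta = np.arccos(np.clip(dot, -1.0, 1.0))
    return (np.sin((1.0 - t) * theta) * q0 + np.sin(t * theta) * q1) / np.sin(theta)

def smooth_orientations(quats, alpha=0.3):
    """Recursive smoothing on SO(3); smaller alpha means stronger smoothing."""
    quats = np.asarray(quats, dtype=np.float64)
    smoothed = [quats[0] / np.linalg.norm(quats[0])]
    for q in quats[1:]:
        smoothed.append(slerp(smoothed[-1], q, alpha))
    return np.stack(smoothed)

# Identity and a 90-degree rotation about z; the midpoint is a 45-degree rotation
q_id = np.array([1.0, 0.0, 0.0, 0.0])
q_z90 = np.array([np.cos(np.pi / 4), 0.0, 0.0, np.sin(np.pi / 4)])
q_mid = slerp(q_id, q_z90, 0.5)
track = smooth_orientations([q_id, q_z90, q_id, q_z90], alpha=0.3)
```

Interpolating on the unit sphere keeps every smoothed output a valid rotation, which a plain component-wise average of quaternions would not guarantee without renormalization.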

Skills & Technologies

PyTorch, EfficientNet, Feature Pyramid Networks (FPN), SLERP, 6-DoF Pose Estimation, SO(3) Geometry, Event Cameras, Computer Vision